By Jennifer K. Chancey

Capital is the buzzword of the day. Does a bank have enough of it right now? Would it be enough to survive a recession or a depression?

One factor that significantly affects banks’ capital positions is the reserves they carry for losses on loans. Current Expected Credit Loss (CECL) is a new methodology, developed by the Financial Accounting Standards Board (FASB), that completely changes the way banks have historically determined these reserves.[1]

CECL was developed because FASB argued that the current incurred loss methodology for bank reserves didn’t provide the forward-looking information that users of financial statements needed. Incurred loss essentially requires a bank to allocate for a loan loss when a triggering event causes the bank to believe that a borrower will not pay his loan back in full. The rationale was that if banks didn’t act until a triggering event, a credit crisis could already be occurring. The proposed solution was for banks to predict the probability and severity of a loss over the life of a loan at the time they put the loan on their books. To do this, banks have to ask themselves these essential questions:

Based on past experience, do we expect this borrower to fulfill his financial obligation? If not, when do we expect a default to occur? How much will we be unable to recover?

That’s right: banks now have to model the frequency and severity of future contingent losses. And who is better suited to quantify banks’ risk than actuaries?

The CECL methodology is one of the biggest accounting changes for the banking industry in history, and it doesn’t go into effect until January 1, 2020.[2]

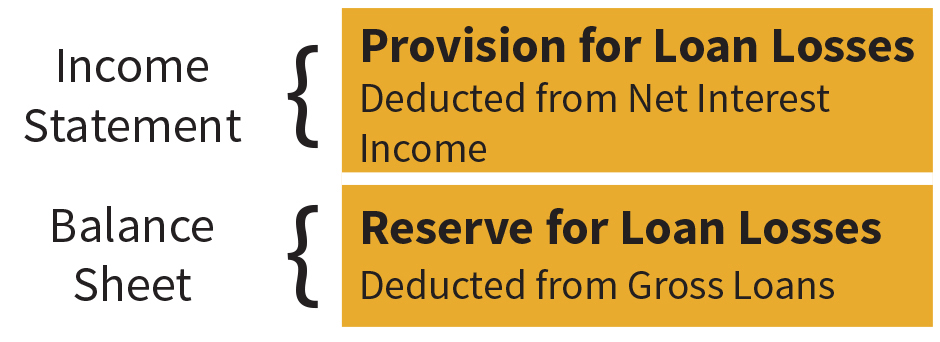

The current Allowance for Loan and Lease Losses (ALLL), or reserve for loan losses, appears on a bank’s balance sheet as a contra asset. A Provision for Loan and Lease Losses (PLLL) appears on the income statement and is deducted from revenues. It is generally added to the ALLL upon determination of the appropriate amount of reserves.

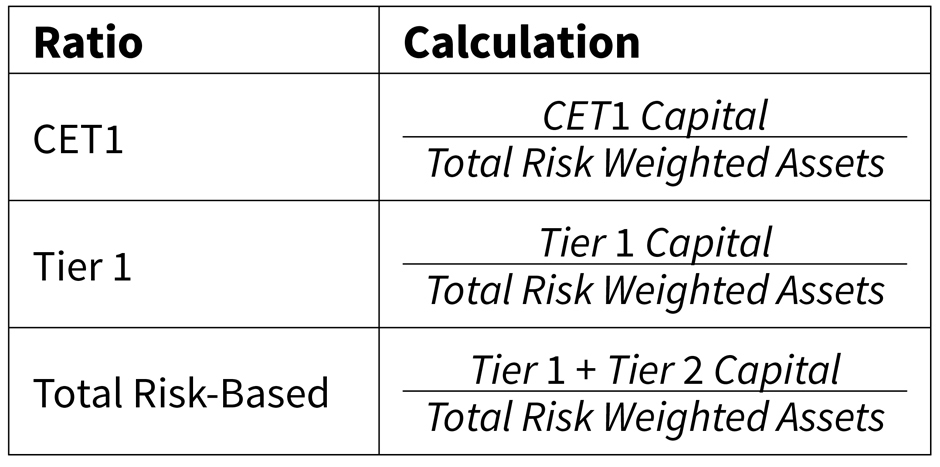

The way that the ALLL and PLLL move through the financial statements is important because it will ultimately have an impact on a bank’s capital ratios. The ALLL has an impact on the PLLL. A significant increase in PLLL, which is the projected impact for the industry when CECL is implemented, will result in a dramatic increase in ALLL. First, because PLLL is deducted from net interest income, total retained earnings will decrease. Retained earnings are a component of the three levels of capital required for the regulatory capital ratios: Common Equity Tier 1 (CET1), Tier 1, and Tier 2 capital.

Tier 1 capital is a function of CET1 capital, and Total Risk-Based capital is a function of Tier 1 capital. It is easy to see how an increase in the ALLL, and therefore a decrease in retained earnings, can travel through the capital ratios, thereby reducing total capital and potentially limiting bank activities, such as dividend payouts or stock repurchases.

Second, the ALLL is included in Tier 2 capital up to a capital limitation.[3] An increase in ALLL will then increase Tier 2 capital. This flows through to total risk-based capital. The decrease in Tier 1 capital would be offset by the increase in Tier 2 capital, and as long as the increased ALLL stays below the capital limitation, total risk-based capital will remain unchanged.
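The two offsetting effects described above can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions that are not in the article: the full allowance increase is expensed through the provision with no tax effect, risk-weighted assets do not change, and the function and parameter names are hypothetical. The 1.25% cap comes from the Tier 2 limitation in note [3].

```python
def capital_after_allowance_increase(cet1, additional_tier1, tier2_other,
                                     allowance, risk_weighted_assets,
                                     allowance_increase, cap_rate=0.0125):
    """Return (tier1, tier2, total) capital after raising the allowance.

    Assumptions (illustrative only): no tax effect, unchanged
    risk-weighted assets, and the increase flows entirely through
    the provision into retained earnings.
    """
    # Allowance counts toward Tier 2 only up to the cap (note [3]).
    cap = cap_rate * risk_weighted_assets

    # The provision reduces retained earnings, a component of CET1.
    new_cet1 = cet1 - allowance_increase
    tier1 = new_cet1 + additional_tier1

    # The larger allowance raises Tier 2, but only up to the cap.
    tier2 = tier2_other + min(allowance + allowance_increase, cap)

    return tier1, tier2, tier1 + tier2


# Hypothetical bank: RWA of 1,000 gives a Tier 2 allowance cap of 12.5.
base = capital_after_allowance_increase(100.0, 10.0, 5.0, 8.0, 1000.0, 0.0)
small = capital_after_allowance_increase(100.0, 10.0, 5.0, 8.0, 1000.0, 3.0)
large = capital_after_allowance_increase(100.0, 10.0, 5.0, 8.0, 1000.0, 10.0)
print(base, small, large)
```

In this hypothetical case, an increase of 3 keeps the allowance under the cap, so Tier 1 falls and Tier 2 rises by the same amount and total capital is unchanged. An increase of 10 pushes the allowance past the cap, so part of the Tier 1 reduction is no longer offset.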

Let’s talk about data requirements. Insurance companies, and even large bank holding companies, love data. This is not necessarily standard across the banking industry. Although complexity requirements are tailored to a bank individually, banks of all sizes will be required to comply with CECL. Most banks might have started electronically collecting detailed loan data years ago, such as loan type, FICO scores, loan-to-value ratios, etc. However, this might not be the case for the smallest banks, which will have to build models for the life of loan with data only going back a few years. In the rare case that a bank has an adequate amount of data, the likelihood that it’s in a centralized database is low. The collection and centralization of the appropriate data has become the focal point of CECL preparation.

Actuaries understand that data will never be perfect. Even though most banks have likely been collecting and retaining digital loan records, they haven’t necessarily been collecting the “right” data—that is to say, the most relevant data. What is the best indicator of default for an auto loan? Is it a FICO score? Maybe the term length of the loan? Not only that, but the type and amount of data collected will determine the granularity of the overall analysis. A loan for an apartment building is going to have different risk characteristics than a loan for a retail location. Yet, in order to analyze these loans as separate pools, banks have to have enough historical and relevant data to develop a reasonable and comprehensible reserve estimate for each type.

FASB did not specify a type of model that should be used for CECL. Banks must determine what methodology works for them based on the type and amount of data they have available. The five most common types of loss methodologies being utilized throughout the industry include loss rate analysis, vintage analysis, transition matrices, discounted cash flow (DCF) models, and regression analysis.

- Loss rate analysis groups together loans that exist on a bank’s balance sheet at a specific moment in time based on certain risk characteristics and tracks losses of that group over a period of time to determine a loss rate for the group. This is also referred to as cohort analysis.

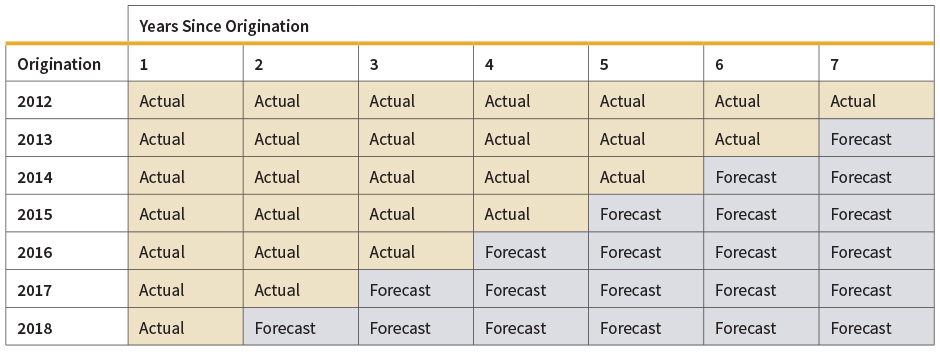

- Vintage analysis is similar to loss rate analysis except that it specifies that loans in the same segment must have been originated around the same time, such as during a certain quarter or certain year.

- Transition matrices determine historical loss rates based on the historical migration of loans to loss, also known as the probability of default (PD). The PD is combined with a loss given default (LGD), which is typically a historical average loss size, to create the whole loss picture. This is also referred to as the roll rate method or the PD/LGD model.

- DCF would essentially apply a probability of default to each cash flow in a stream of cash flows and discount them to the current period.

- Regression analysis creates an approximation of loss based on the historical relationship between loss rates and various inputs, such as credit characteristics or macroeconomic variables.

It seems that the majority of banks are implementing loss rate or vintage analysis for at least a portion of their loan portfolios.
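To make two of the listed methods concrete, here is a minimal Python sketch of a cohort loss rate and of a PD derived from a transition matrix and combined with an LGD. The three-state matrix, the absorbing default state, and all figures are hypothetical assumptions for illustration; they are not taken from any bank's model.

```python
def cohort_loss_rate(starting_balance, charge_offs, recoveries=0.0):
    """Net losses on a fixed pool of loans divided by its starting balance."""
    return (sum(charge_offs) - recoveries) / starting_balance


def cumulative_pd(transition, start_state, default_state, periods):
    """Probability of having migrated to default after `periods` steps.

    `transition[i][j]` is the one-period probability of moving from
    state i to state j. Default is assumed to be absorbing.
    """
    n = len(transition)
    probs = [0.0] * n
    probs[start_state] = 1.0
    for _ in range(periods):
        # One step of the Markov chain: redistribute probability mass.
        probs = [sum(probs[i] * transition[i][j] for i in range(n))
                 for j in range(n)]
    return probs[default_state]


def pd_lgd_expected_loss(exposure, pd, lgd):
    """Expected loss as exposure x probability of default x loss given default."""
    return exposure * pd * lgd


# Hypothetical states: 0 = current, 1 = delinquent, 2 = default.
matrix = [
    [0.90, 0.08, 0.02],
    [0.50, 0.30, 0.20],
    [0.00, 0.00, 1.00],
]
two_period_pd = cumulative_pd(matrix, start_state=0, default_state=2, periods=2)
print(cohort_loss_rate(1_000_000, [4_000, 6_000, 5_000], recoveries=3_000))
print(two_period_pd, pd_lgd_expected_loss(1_000_000, two_period_pd, 0.40))
```

A vintage analysis would apply the same loss rate calculation to pools defined by origination period rather than by a balance sheet date.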

However, an interesting solution that could be explored is a cohort life table, because the regulation requires that banks calculate expected losses for the life of the loan, which can be a substantial length—such as with 30-year mortgage loans. This approach would have to be developed over time and would not be ready for use with CECL on day one. Life tables have been developed with significant amounts of historical records. This same type of effort would need to be undertaken by the banking industry to produce usable loan life tables. Banks could send scrubbed loan information to a group of actuaries dedicated to the research and collection of such data and table development. This approach would be beneficial for the DCF methodology because it would provide a PD for each year since loan origination.
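As a rough illustration of how a PD for each year since origination could feed the DCF methodology, the sketch below weights each contractual cash flow by the probability that the loan has survived to that year and discounts the result. The assumptions are mine, not the article's: cash flows are annual, nothing is recovered after default, and the yearly PDs are conditional on survival to the start of the year.

```python
def dcf_expected_loss(cash_flows, annual_pds, discount_rate):
    """Present value of contractual cash flows minus PV of expected cash flows.

    cash_flows[k] and annual_pds[k] refer to year k + 1 since origination.
    Assumes zero recovery after default (a simplification).
    """
    if len(cash_flows) != len(annual_pds):
        raise ValueError("need one PD per cash flow")

    survival = 1.0
    pv_contractual = 0.0
    pv_expected = 0.0
    for year, (cash_flow, pd) in enumerate(zip(cash_flows, annual_pds), start=1):
        survival *= 1.0 - pd  # probability the loan is still paying in this year
        discount = (1.0 + discount_rate) ** -year
        pv_contractual += cash_flow * discount
        pv_expected += cash_flow * survival * discount
    return pv_contractual - pv_expected


# Hypothetical three-year loan with a PD curve taken from a loan life table.
print(dcf_expected_loss([100.0, 100.0, 1100.0], [0.010, 0.015, 0.020], 0.05))
```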

Another option would be the use of loss triangles, which are similar to the vintage analysis already being used by the industry. This option would be more realistic in terms of both data and organization. The period would be the year or quarter in which a loan becomes nonperforming (that is, a loan that is 90 days past due or not accruing interest). Then each column of age, here being the year or quarter, would include the total charge-offs from the period. The vintage method uses origination year as the beginning period, not a triggering event such as a loan downgrade, and it also includes a triangle of forecasted values.
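The triangle layout described above can be sketched as follows. The record format, a tuple of the year the loan became nonperforming, the year of the charge-off, and the amount, is a hypothetical one chosen for illustration; a real implementation would depend on how a bank stores its loan history.

```python
from collections import defaultdict


def build_loss_triangle(records):
    """Group charge-offs by nonperforming year (row) and age in years (column).

    records: iterable of (nonperforming_year, charge_off_year, amount).
    Returns {nonperforming_year: {age: total_charge_offs}}.
    """
    triangle = defaultdict(lambda: defaultdict(float))
    for nonperforming_year, charge_off_year, amount in records:
        age = charge_off_year - nonperforming_year
        if age < 0:
            raise ValueError("charge-off precedes nonperforming date")
        triangle[nonperforming_year][age] += amount
    # Sort rows and columns so the result prints in triangle order.
    return {year: dict(sorted(ages.items()))
            for year, ages in sorted(triangle.items())}


def cumulative_row(row):
    """Convert one row of incremental charge-offs into cumulative amounts."""
    total = 0.0
    cumulative = {}
    for age in range(max(row) + 1):
        total += row.get(age, 0.0)
        cumulative[age] = total
    return cumulative


# Hypothetical charge-off history.
history = [
    (2015, 2015, 10.0), (2015, 2016, 5.0), (2015, 2016, 2.5),
    (2016, 2016, 8.0), (2016, 2017, 4.0),
]
triangle = build_loss_triangle(history)
print(triangle)
print(cumulative_row(triangle[2015]))
```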

Banks not only have to analyze individual loan characteristics; they also need to relate segments of the portfolio to the economy at large. It’s entirely probable that growth of gross domestic product (GDP), or lack thereof, will have an impact on commercial real estate loans. Yet banks will have to create economic forecasts for macroeconomic indicators that they deem important to their portfolios, and these forecasts are required to be reasonable and supportable. According to the Moody’s Analytics whitepaper Economic Scenarios: What’s Reasonable and Supportable?,[4] “The phrase ‘reasonable and supportable forecasts’ appears more than 30 times in the [FASB’s] CECL standard.” This means a bank’s CECL forecasting model “should be based on sound, generally accepted economic and statistical theory … and should incorporate inter-relationships and feedback effects among economic variables.” The common approaches suggested include time series analysis, dynamic stochastic general equilibrium models, machine learning/data mining algorithms, and structural models.

After the most probable economic scenario is modeled, banks have to figure out a way to apply it to their portfolios. This application is known as a qualitative adjustment (QA). For example, let’s say that the commercial real estate portfolio is most affected by GDP, and a bank is projecting a moderate recession for the next two to three years. That is, GDP growth is going to be negative for a few quarters, which will result in an increase in both projected default rates and loss severities. What is the quantitative impact of this factor? Is it going to be a one-time spike in defaults and loss severities? What if it’s a gradual build-up followed by a gradual improvement; i.e., mean reversion? The way that banks apply this QA could have a huge impact on the volatility of the reserve.
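One way to see how the shape of the adjustment matters is to lay out a PD path quarter by quarter. The sketch below is a hypothetical illustration, not a prescribed approach: it holds a stressed PD over the forecast horizon, reverts linearly to a long-run PD, and then stays there. It models only the gradual improvement, not a gradual build-up, and all names and figures are assumptions.

```python
def pd_path(long_run_pd, stressed_pd, forecast_quarters,
            reversion_quarters, total_quarters):
    """Quarterly PDs: stressed, then linear reversion, then long-run level."""
    path = []
    for quarter in range(total_quarters):
        if quarter < forecast_quarters:
            # Inside the supportable forecast horizon: use the stressed PD.
            path.append(stressed_pd)
        elif quarter < forecast_quarters + reversion_quarters:
            # Move a fixed fraction of the gap back toward the long-run PD.
            step = quarter - forecast_quarters + 1
            weight = step / reversion_quarters
            path.append(stressed_pd + weight * (long_run_pd - stressed_pd))
        else:
            path.append(long_run_pd)
    return path


# Gradual reversion over four quarters versus an immediate snap-back.
gradual = pd_path(0.01, 0.03, forecast_quarters=4, reversion_quarters=4,
                  total_quarters=12)
spike = pd_path(0.01, 0.03, forecast_quarters=4, reversion_quarters=0,
                total_quarters=12)
print(sum(gradual), sum(spike))
```

Setting `reversion_quarters` to zero gives the one-time spike case, so the two choices can be compared on the same portfolio. The gradual path carries elevated PDs for longer, which would translate into a larger lifetime reserve under otherwise identical inputs.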

I recently listened to a presentation by Washington Trust Bank’s CFO, Larry Sorensen. His team applied their CECL models to the Great Recession, and they ended up with very interesting results. The one thing that caught my attention was Sorensen’s interpretation of CECL’s potential consequences. The purpose of CECL is ostensibly to warn banks of an impending downturn so they can adequately prepare. However, if a bank is predicting a downturn, a logical action would be to curtail lending. This would limit risk and save capital. If the recession was any indication, it seems that this would cause liquidity issues that would “accelerate and exacerbate” a recession.[5]

A major aspect of CECL that banks are concerned with is the volatility of reserves. This volatility can come from a variety of places—the model used to project PDs and LGDs, the factors used to create QAs to adjust for economic realities and probabilities, and the method of applying all of the above. The amount of volatility will also vary among banks, and the change in reserves won’t necessarily be directionally consistent. Banks ultimately want to find the right balance between the most accurate estimate and the estimate that is easiest to explain. As actuaries know, there is always a trade-off between a more accurate estimate and projection simplicity. And in most cases, a simpler model is preferred to a more complex model, all else being equal.

The first quarter of 2020 is rapidly approaching. SEC registrants would ideally prefer four quarters of testing alongside current ALLL models to refine their CECL models. The race to have models in place by January 2019 is in full stride. So where does bank readiness currently stand? Leading up to the actual implementation of CECL, regulators will be evaluating the CECL-readiness of the individual banks. They’ll be looking at things such as data collection efforts, the leverage of existing processes or methodologies, necessary system changes, and a capital impact plan.

Where Are the Actuaries?

If you go looking, you won’t find many actuaries in commercial banking; even concrete statistics about how many actuaries are in this field have proved impossible to find. In my career, I’ve only met one other actuary working in the industry, and I think the profession is missing out on a huge opportunity.

Banking entails managing a host of risks, just like insurance. Nothing proves that as much as CECL. ‘Current Expected’ Credit Losses sounds like an oxymoron, but so does a loss modeling structure without an actuary. The following are the vision statements of the American Academy of Actuaries, Society of Actuaries, and the Casualty Actuarial Society, respectively:

- The vision of the American Academy of Actuaries is that financial security systems in the United States be sound and sustainable, and that actuaries be recognized as preeminent experts in risk and financial security.[6]

- Actuaries are highly sought-after professionals who develop and communicate solutions for complex financial issues.[7]

- Actuaries are recognized for their authoritative advice and valued comment wherever there is financial risk and uncertainty.[8]

CECL is a complex financial issue dealing with significant financial risk and uncertainty to the financial security system in the U.S. Yet, the guidance of actuaries is currently absent. While it might be too late for actuaries to lead banks through CECL implementation, there is great opportunity to improve the processes going forward.

JENNIFER K. CHANCEY, MAAA, ASA, is a Treasury analyst with United Bank.

References

[1] CECL also changes the accounting for investment securities and various other assets, but loans are the main focus of the regulation due to the significant impact it will have on the ALLL throughout the industry.

[2] The deadline for SEC registrants is for fiscal years beginning after December 15, 2019, which typically implies the first quarter of 2020. It is January 1, 2022 for non-SEC registrants.

[3] The Tier 2 capital limitation is 1.25% of Total Risk-Adjusted Assets and Off-Balance Sheet Items.

[4] deRitis (2017)

[5] Sorensen (2018), “Financial Crisis Retrospective under CECL…Beyond Implementation to Impact”

[6] http://www.actuary.org/content/vision-mission

[7] https://www.soa.org/about/mission-vision/

[8] https://www.casact.org/about/

Stress Test for Banks

Regression analysis is attractive for stress testing because it provides a direct and logical way for the macroeconomic scenarios to be applied to loan data. It is data-intensive for individual loans, but for a loan segment, it is easily applicable. For example, PDs of a commercial real estate portfolio can be estimated the following way:

$$\mathrm{PD\_CRE}_{t} = \text{Intercept} + \mathrm{PD\_CRE}_{t-1} + \beta_1 \times \text{Real GDP}_{t-2} + \beta_2 \times \text{Unemployment Rate}_{t-1} + \varepsilon$$

The regulatory agencies (the Office of the Comptroller of the Currency (OCC), the Federal Reserve, and the Federal Deposit Insurance Corporation (FDIC)) release macroeconomic data for three scenarios that is required to be used in DFAST and CCAR to stress a bank’s capital ratios. It would seem to make sense for the current and previous DFAST and CCAR institutions to continue using these methodologies for CECL. The main difference is that they are now responsible for determining the most likely economic scenario to occur for the life of their loan portfolios. However, for smaller community banks that are now subject to CECL, these models are inaccessible due to data, infrastructure, and personnel constraints.
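Applying a fitted equation of this form to a macroeconomic scenario could look like the sketch below. The coefficients and scenario values are made up for illustration, the error term is dropped because only the expected value is projected, and the clamp to the range 0 to 1 is a safeguard I have added rather than part of the equation.

```python
def project_pd_path(last_pd, gdp_lag2, unemployment_lag1,
                    intercept, beta_gdp, beta_unemployment):
    """Project segment PDs forward one period at a time.

    gdp_lag2[i] and unemployment_lag1[i] are the already-lagged scenario
    inputs for projection step i (Real GDP at t-2, unemployment at t-1).
    """
    path = []
    pd = last_pd
    for gdp, unemployment in zip(gdp_lag2, unemployment_lag1):
        # Mirrors the sidebar equation, which carries the prior PD forward
        # with a coefficient of one; the error term is omitted.
        pd = intercept + pd + beta_gdp * gdp + beta_unemployment * unemployment
        # Added safeguard (assumption): keep the result a valid probability.
        pd = min(max(pd, 0.0), 1.0)
        path.append(pd)
    return path


# Hypothetical coefficients and a two-period scenario.
print(project_pd_path(0.02, [-1.0, 2.0], [6.0, 5.0],
                      intercept=-0.01, beta_gdp=-0.002,
                      beta_unemployment=0.002))
```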
